regularization machine learning mastery

Sometimes one resource is not enough to get you a good understanding of a concept. Regularization is one of the most important concepts of machine learning.

Start Here With Machine Learning

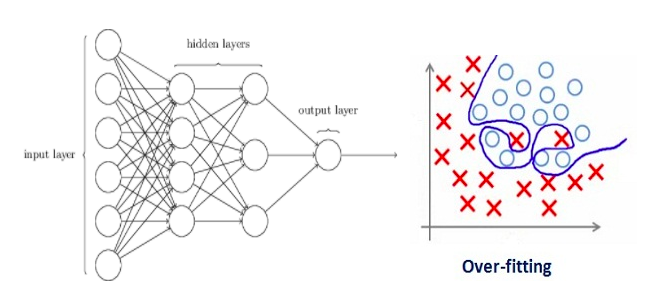

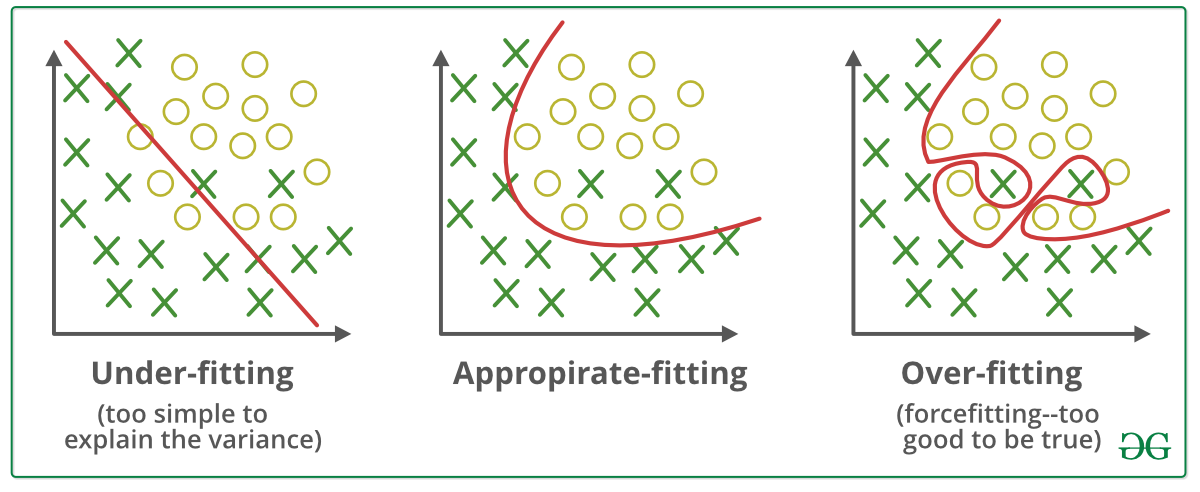

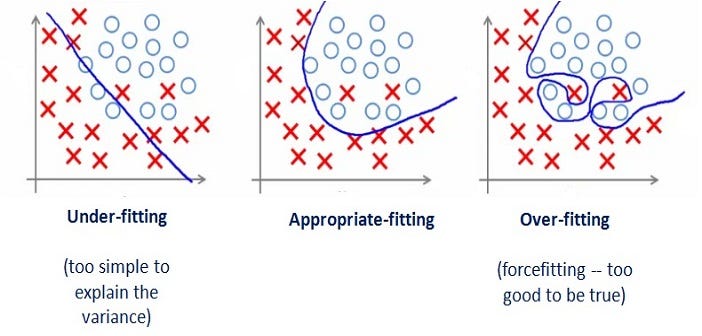

Overfitting happens because your model is trying too hard to capture the noise in your training dataset.

Regularization is a technique used to reduce errors by fitting the function appropriately on the given training set and avoiding overfitting. Dropout was proposed in a 2014 paper. Regularized cost function and Gradient Descent.

In simple words, regularization discourages learning a more complex or flexible model, to prevent overfitting. Similarly, we always want to build a machine learning model which understands the underlying pattern in the training dataset and develops an input-output relationship that helps in.

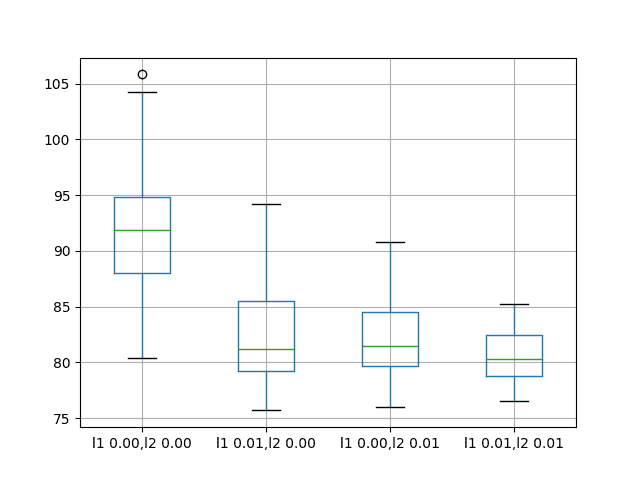

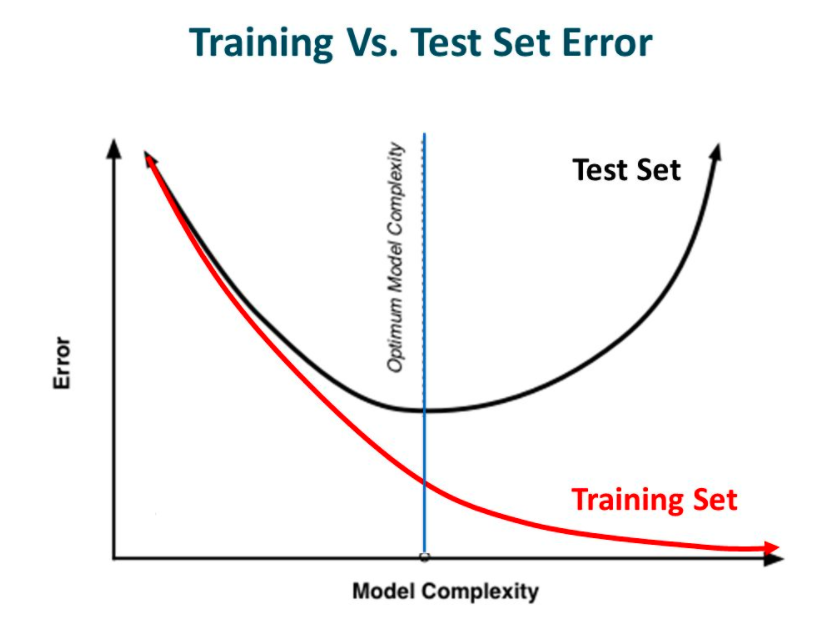

Regularization is used in machine learning as a solution to overfitting: it reduces the variance of the ML model under consideration. Among many regularization techniques, such as L2 and L1 regularization, dropout, data augmentation, and early stopping, we will learn here the intuitive differences between L1 and L2. Sometimes the machine learning model performs well with the training data but does not perform well with the test data.

Dropout Regularization For Neural Networks. Regularization in Machine Learning. Regularization in machine learning is an important concept, and it helps address the overfitting problem.

Machine learning involves equipping computers to perform specific tasks without explicit instructions. People are often confused about which regularization approach to select to avoid overfitting while training a machine learning model. L2 regularization or Ridge Regression.

In the context of machine learning, regularization is the process which regularizes or shrinks the coefficients towards zero. A regression model which uses the L1 regularization technique is called LASSO (Least Absolute Shrinkage and Selection Operator) regression.

Regularization helps prevent the model from overfitting by adding extra information to it. Regularization in Machine Learning: What is Regularization? Equation of general learning model.

The key difference between L1 and L2 regularization is the penalty term. Part 2 explains what regularization is and gives some proofs related to it. L1 regularization or Lasso Regression.

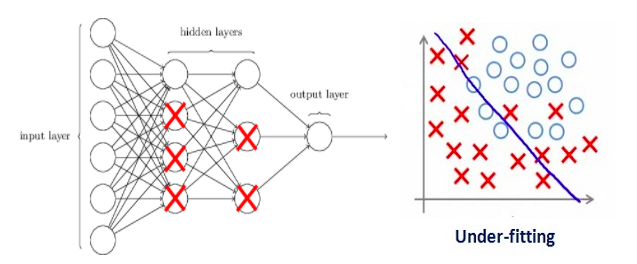

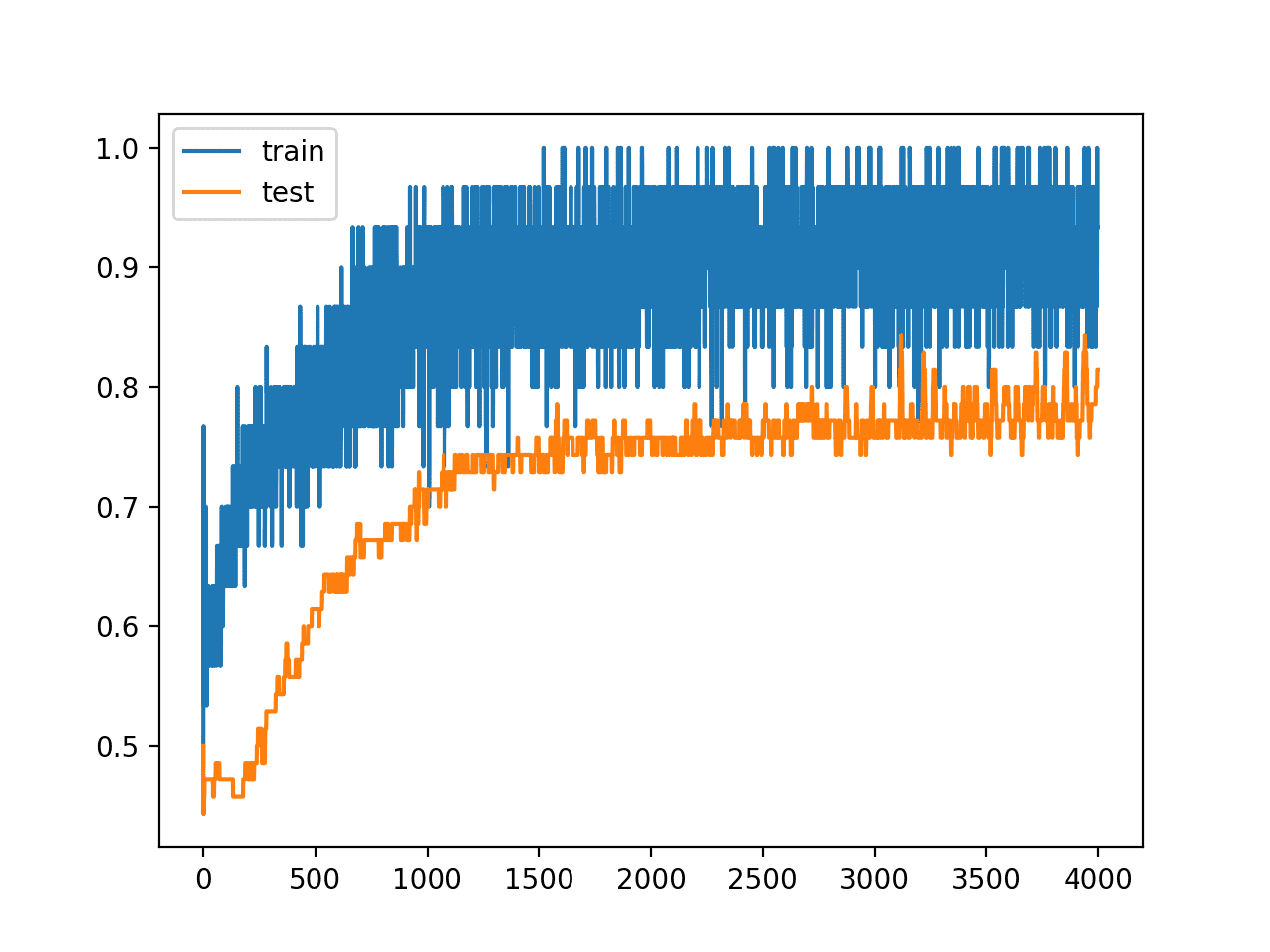

Regularization can be implemented in multiple ways, by modifying the loss function, the sampling method, or the training approach itself. I have covered the entire concept in two parts. Dropout is a technique where randomly selected neurons are ignored during training.

The dropout paper is titled "Dropout: A Simple Way to Prevent Neural Networks from Overfitting". The ways to go about it can differ; one is measuring a loss function and then iterating over. Part 1 deals with the theory of why regularization came into the picture and why we need it.

You can refer to this playlist on YouTube for any queries regarding the math behind the concepts in Machine Learning. It means the model is not able to. The default interpretation of the dropout hyperparameter is the probability of training a given node in a layer, where 1.0 means no dropout and 0.0 means no outputs from the layer.
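
The dropout hyperparameter described above can be sketched in plain Python. This is a minimal illustration, assuming the "inverted dropout" variant, in which surviving activations are divided by the retention probability so that their expected value is unchanged. The function name `dropout` and the parameter name `keep_prob` are hypothetical and not taken from any library.

```python
import random

def dropout(activations, keep_prob, rng):
    """Apply inverted dropout to a list of activations (illustrative only).

    keep_prob is the probability of retaining a node:
    1.0 means no dropout, 0.0 means no outputs from the layer.
    """
    if not 0.0 <= keep_prob <= 1.0:
        raise ValueError("keep_prob must be in [0, 1]")
    if keep_prob == 0.0:
        return [0.0 for _ in activations]
    # Keep each node with probability keep_prob and rescale the survivors
    # so the expected value of each activation stays the same.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]

rng = random.Random(0)
layer_output = [0.5, 1.0, 1.5, 2.0]
print(dropout(layer_output, 1.0, rng))  # every node kept, values unchanged
print(dropout(layer_output, 0.5, rng))  # each node zeroed with probability 0.5
```

Dropout is applied only during training; at prediction time the layer output is used as is. Note that some libraries define their dropout argument the other way round, as the fraction of nodes to drop, so it is worth checking the convention before copying a value.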

If the model is Logistic Regression then the loss is. Regularization is one of the most basic and important concepts in the world of Machine Learning. Ridge regression adds the squared magnitude of the coefficients as a penalty term to the loss function.
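
As a rough sketch of how a penalty term is added to a loss, the snippet below computes an L2 (ridge-style) and an L1 (lasso-style) penalty and adds each to a mean squared error. The function names, the example numbers, and the strength parameter `lam` are assumptions made for illustration.

```python
def l1_penalty(weights, lam):
    """Lasso-style penalty: lam times the sum of absolute coefficient values."""
    return lam * sum(abs(w) for w in weights)

def l2_penalty(weights, lam):
    """Ridge-style penalty: lam times the sum of squared coefficient values."""
    return lam * sum(w * w for w in weights)

def mse(y_true, y_pred):
    """Mean squared error between targets and predictions."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def regularized_loss(y_true, y_pred, weights, lam, penalty):
    # Optimization function = loss + regularization term
    return mse(y_true, y_pred) + penalty(weights, lam)

# Made-up values, used only to exercise the functions.
weights = [3.0, -0.5, 0.0]
y_true = [1.0, 2.0, 3.0]
y_pred = [1.5, 2.0, 2.5]
print(regularized_loss(y_true, y_pred, weights, 0.1, l1_penalty))
print(regularized_loss(y_true, y_pred, weights, 0.1, l2_penalty))
```

Because the L2 penalty squares each coefficient, the large coefficient (3.0) dominates it, while the L1 penalty grows only linearly with coefficient size.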

It is a form of regression that shrinks the coefficient estimates towards zero. One of the major aspects of training your machine learning model is avoiding overfitting. Regularization is essential in machine and deep learning.
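
The shrinking effect can be seen in a small worked case. The sketch below assumes a single feature, no intercept, and the objectives sum((y - w*x)^2) + lam * w^2 for ridge and sum((y - w*x)^2) + lam * |w| for lasso; under those assumptions both minimizers have closed forms. The function names and data are illustrative.

```python
def ridge_coef(xs, ys, lam):
    """Minimizer of sum((y - w*x)^2) + lam * w^2 for one feature, no intercept."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)

def lasso_coef(xs, ys, lam):
    """Minimizer of sum((y - w*x)^2) + lam * |w| (soft-thresholding)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    # Pull sum(x*y) towards zero by lam/2, stopping at zero.
    shrunk = max(abs(sxy) - lam / 2.0, 0.0)
    return (shrunk if sxy >= 0 else -shrunk) / sxx

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # exactly y = 2x, so the unregularized coefficient is 2
for lam in (0.0, 10.0, 100.0):
    print(lam, ridge_coef(xs, ys, lam), lasso_coef(xs, ys, lam))
```

With increasing `lam`, the ridge coefficient gets smaller but stays nonzero, while the lasso coefficient reaches exactly zero once `lam` is large enough.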

By noise we mean the data points that don't really represent. It is not a complicated technique and it simplifies the machine learning process. It is one of the most important concepts of machine learning.

Setting up a machine-learning model is not just about feeding the data. A regression model that uses the L1 regularization technique is called Lasso Regression, and a model which uses L2 is called Ridge Regression.

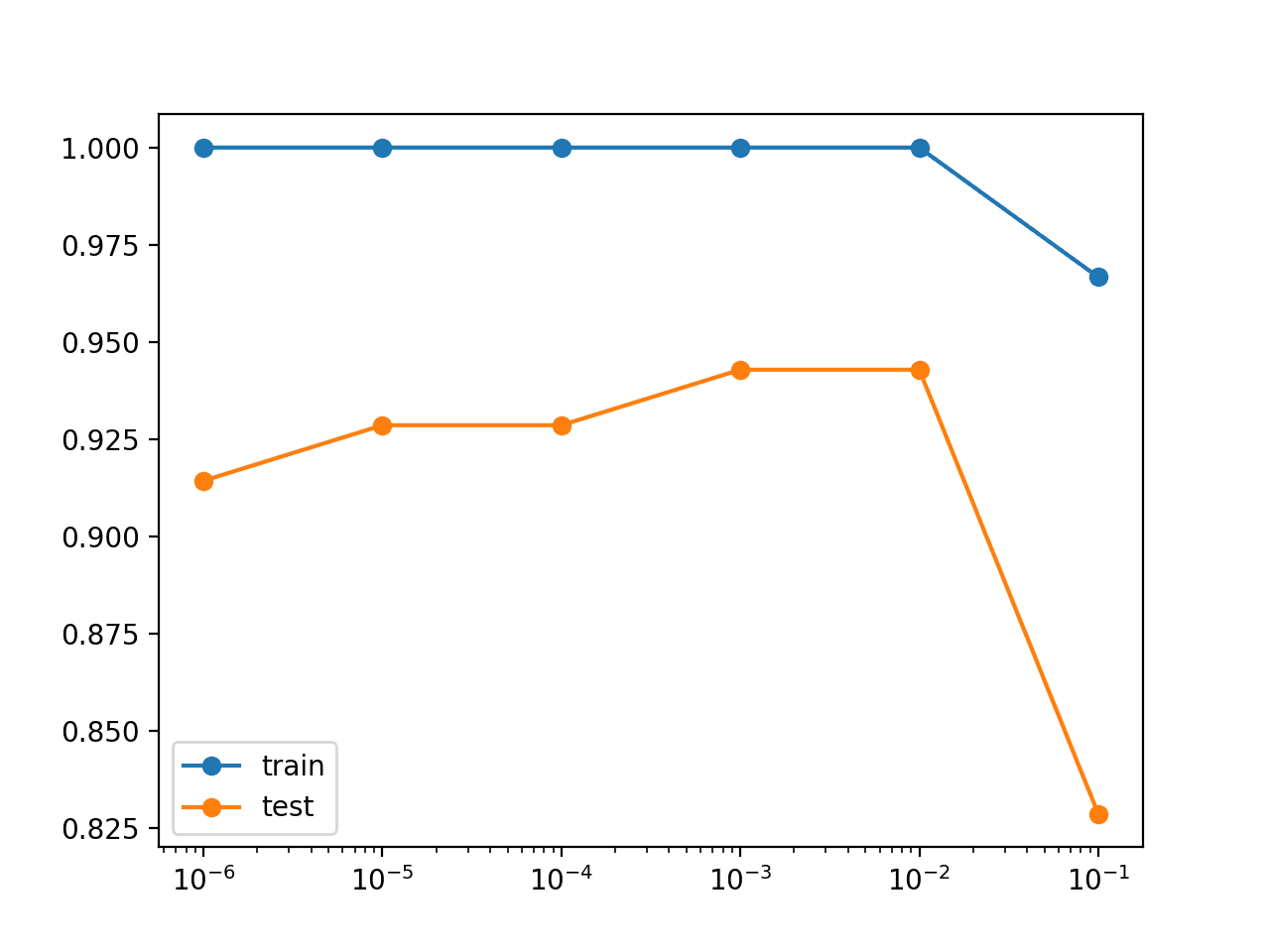

When you are training your model through machine learning with the help of. Using cross-validation to determine the regularization coefficient. This article focuses on L1 and L2 regularization.
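
One way cross-validation can be used to choose the regularization coefficient is sketched below. It assumes a single-feature ridge model without an intercept (which has a closed-form solution) and a simple interleaved k-fold split. The helper names, the candidate values, and the toy data are all hypothetical.

```python
def fit_ridge(xs, ys, lam):
    """Single-feature ridge without intercept: w = sum(x*y) / (sum(x*x) + lam)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)

def cv_error(xs, ys, lam, k):
    """Mean squared validation error over k folds (k must be at least 2)."""
    n = len(xs)
    total = 0.0
    for fold in range(k):
        # Every k-th point, offset by the fold index, is held out for validation.
        val_idx = set(range(fold, n, k))
        train_x = [xs[i] for i in range(n) if i not in val_idx]
        train_y = [ys[i] for i in range(n) if i not in val_idx]
        w = fit_ridge(train_x, train_y, lam)
        total += sum((ys[i] - w * xs[i]) ** 2 for i in val_idx)
    return total / n

def select_lambda(xs, ys, candidates, k=3):
    """Return the candidate coefficient with the lowest cross-validated error."""
    return min(candidates, key=lambda lam: cv_error(xs, ys, lam, k))

# Toy data lying close to y = 2x.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2]
best = select_lambda(xs, ys, [0.0, 0.1, 1.0, 10.0, 100.0])
print("selected regularization coefficient:", best)
```

The coefficient is judged on data the model was not fitted on, so a value that merely fits the training folds well is not rewarded.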

Input layers use a larger dropout rate, such as 0.8. Data scientists typically use regularization in machine learning to tune their models in the training process. I have learnt regularization from different sources and I feel learning from different.

The model will have low accuracy on unseen data if it is overfitting. By Data Science Team 2 years ago. The cheat sheet below summarizes different regularization methods.

It is very important to understand regularization to train a good model. Regularization helps us build a model that is less tied to the peculiarities of the training data. A good value for dropout in a hidden layer is between 0.5 and 0.8.

In other words, this technique discourages learning a more complex or flexible model, to avoid the problem of overfitting. It is a technique to prevent the model from overfitting by adding extra information to it. Optimization function = Loss + Regularization term.
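
Written out for a linear model with a squared-error loss, the optimization function takes the following form for ridge (L2) and lasso (L1) regression. The symbols are notation chosen here for illustration: $\lambda \ge 0$ is the regularization strength, $w_j$ are the coefficients, and $\hat{y}_i$ is the model's prediction for example $i$.

$$
J_{\text{ridge}}(w) = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2 + \lambda \sum_{j=1}^{p} w_j^2
$$

$$
J_{\text{lasso}}(w) = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2 + \lambda \sum_{j=1}^{p} |w_j|
$$

In both cases the first sum is the loss and the second is the regularization term; setting $\lambda = 0$ recovers ordinary least squares.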

Concept of regularization. Moving on with this article on Regularization in Machine Learning. Let us understand this concept in detail.

So the systems are programmed to learn and improve from experience automatically. Dropout is a regularization technique for neural network models proposed by Srivastava et al. A regression model.

Day 3 Overfitting Regularization Dropout Pretrained Models Word Embedding Deep Learning With R

Weight Regularization With Lstm Networks For Time Series Forecasting

Machine Learning Mastery Workshop Enthought Inc

Deep Learning Garden Page 11 Liping S Machine Learning Computer Vision And Deep Learning Home Resources About Basics Applications And Many More

A Gentle Introduction To Dropout For Regularizing Deep Neural Networks

Regularization In Machine Learning And Deep Learning By Amod Kolwalkar Analytics Vidhya Medium

How To Choose An Evaluation Metric For Imbalanced Classifiers Class Labels Machine Learning Probability

Types Of Machine Learning Algorithms By Ken Hoffman Analytics Vidhya Medium

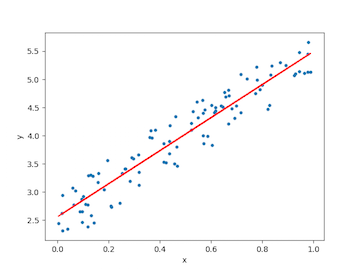

Linear Regression For Machine Learning

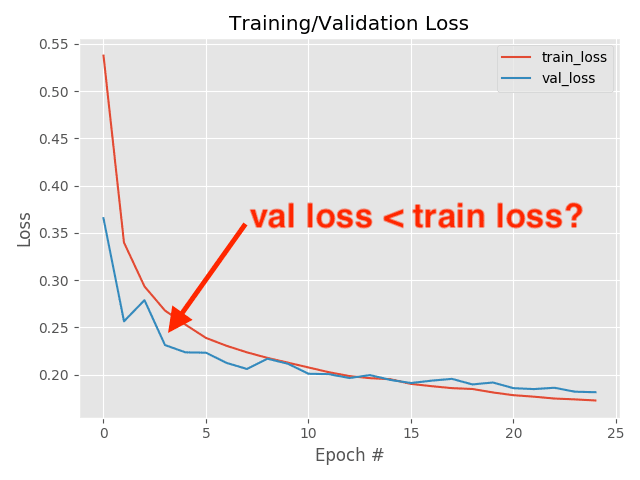

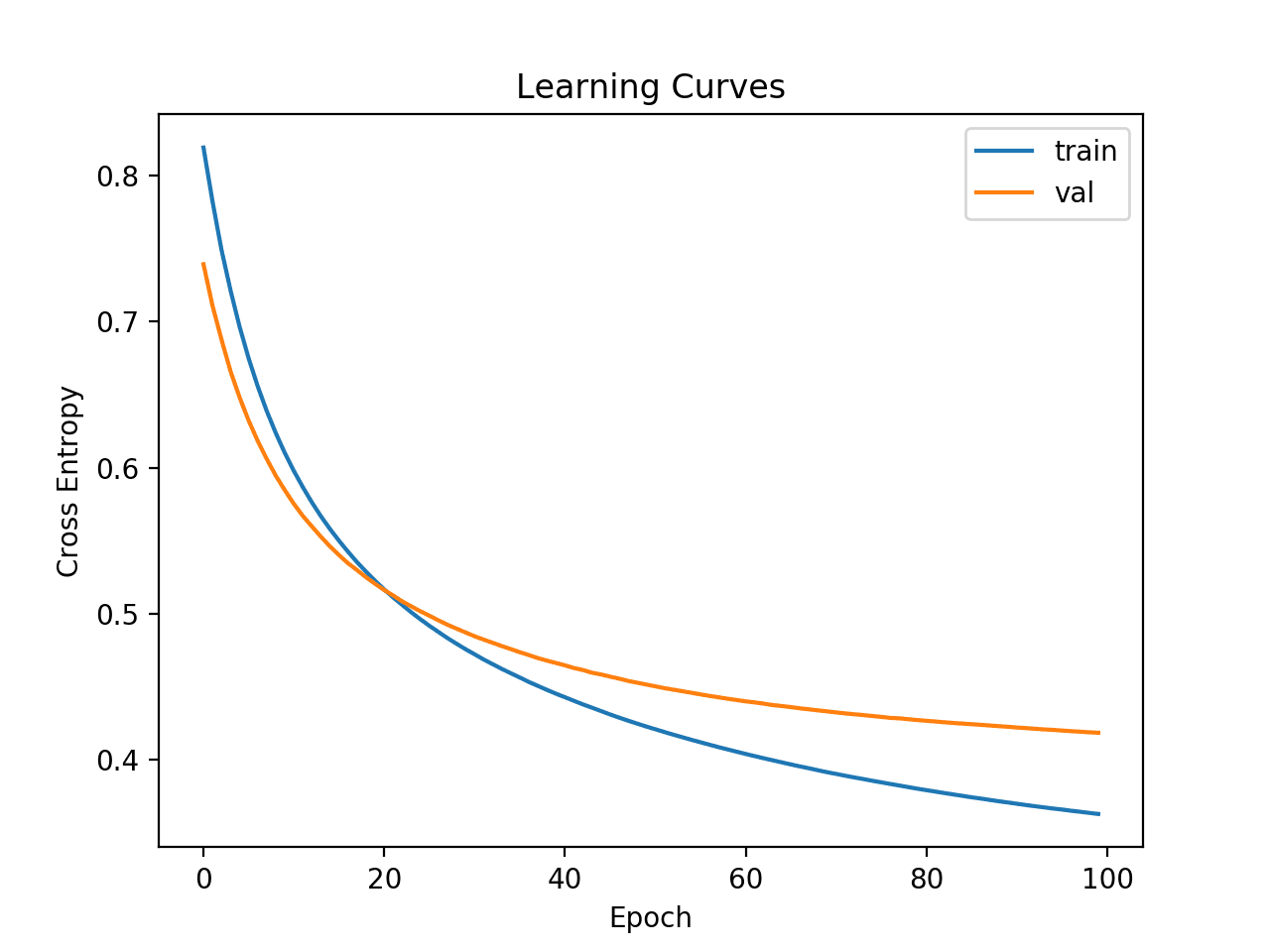

Why Is My Validation Loss Lower Than My Training Loss Pyimagesearch

Chapter 7 Under Fitting Over Fitting And Its Solution By Ashish Patel Ml Research Lab Medium
